Building a Discord Command in Ruby on Google Cloud Functions: Part 3

This is the third of a four-part series on writing a Discord “slash” command in Ruby using Google Cloud Functions. In previous parts, we deployed a Discord app to Google Cloud Functions and added a command to a server using the Discord API. In this part, we actually implement the command, calling an external API and displaying results in the channel. Along the way, it illustrates how to respond to commands, and how to handle secrets such as API keys in production.

Previous articles in this series:

Responding to commands

When we left off in part 2, we had created a command in a Discord server, but hadn’t yet implemented the back end. We’ll do so now.

When someone invokes a command, an interaction request of type 2 gets sent to our webhook. The JSON data sent with this request will include the command that was sent, and any options that were provided. For our command, that includes a Scripture reference.
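As a rough illustration, a parsed command request might look something like the payload below. This is a hypothetical, heavily trimmed example: real interactions carry many more fields (such as an ID, a token, and guild and member data). The option “type” of 3 is Discord’s code for a string option.

```ruby
require "json"

# A hypothetical, heavily trimmed type-2 (application command) interaction body.
# Real requests from Discord include many more fields (id, token, guild_id, member, ...).
raw_body = <<~JSON
  {
    "type": 2,
    "data": {
      "name": "bible",
      "options": [
        { "name": "reference", "type": 3, "value": "JHN.1.1" }
      ]
    }
  }
JSON

interaction = JSON.parse(raw_body)

# Options arrive as an array of {name, type, value} hashes; find ours by name.
option = interaction["data"]["options"].find { |opt| opt["name"] == "reference" }
reference = option && option["value"]

puts interaction["type"]  # prints 2
puts reference            # prints JHN.1.1
```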

To start off, let’s update the Responder#respond method to recognize type 2, and call a new method handle_command that we will implement:

```ruby
# responder.rb
# ...

class Responder
  # ...

  def respond(rack_request)
    raw_body = rack_request.body.read
    unless verify_request(raw_body, rack_request.env)
      # Discord expects a 401 response if the verification failed
      return [401,
              {"Content-Type" => "text/plain"},
              ["invalid request signature"]]
    end
    interaction = JSON.parse(raw_body)
    case interaction["type"]
    when 1
      # Ping
      {type: 1}
    when 2
      # Command
      handle_command(interaction)
    else
      [400,
       {"Content-Type" => "text/plain"},
       ["Unrecognized interaction type"]]
    end
  end

  # ...
end
```

Now for the handle_command method. Discord expects an interaction response JSON message in the HTTP response. Typically, the response includes a message that Discord will then display in the channel. We signal such a response by setting the “type” field to 4, and returning the text to display in a “content” field inside a “data” object.
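Since the Functions Framework renders a returned hash as JSON, it can help to see roughly what goes over the wire. This sketch names the callback type using the label from Discord’s documentation (CHANNEL_MESSAGE_WITH_SOURCE); the article’s code simply uses the bare number 4, and the message_response helper is made up for illustration.

```ruby
require "json"

# Discord's name for interaction response type 4.
CHANNEL_MESSAGE_WITH_SOURCE = 4

# Build the response body for a command: type 4, plus the text to post under "data".
def message_response(text)
  { type: CHANNEL_MESSAGE_WITH_SOURCE, data: { content: text } }
end

body = JSON.generate(message_response("You looked up JHN.1.1"))
puts body
# prints {"type":4,"data":{"content":"You looked up JHN.1.1"}}
```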

To start off, let’s construct a response by echoing back the Scripture reference that we were asked to look up. First we’ll write code to parse the interaction and get the option with name “reference”. For simplicity, we’ll just raise an exception (which Cloud Functions will translate to a 500 response) if the command data doesn’t have the expected format.

```ruby
# responder.rb
# ...

class Responder
  # ...

  def reference_from_interaction(interaction)
    command_data = interaction["data"]
    unless command_data["name"] == "bible"
      raise "Unexpected command: #{command_data['name']}"
    end
    command_data["options"].each do |option|
      if option["name"] == "reference"
        return option["value"]
      end
    end
    raise "No reference option found"
  end

  # ...
end
```

Now we’ll use that to implement handle_command to return a message to display. Remember that if we return a hash from a Cloud Function, it is automatically rendered as JSON in the HTTP response.

# responder.rb

# ...

class Responder

# ...

def handle_command(interaction)

reference = reference_from_interaction(interaction)

{

type: 4,

data: {

content: "You looked up #{reference}"

}

}

end

# ...

endNow let’s redeploy the function:

$ bundle install

$ gcloud functions deploy discord_webhook \

--project=$MY_PROJECT --region=us-central1 \

--trigger-http --entry-point=discord_webhook \

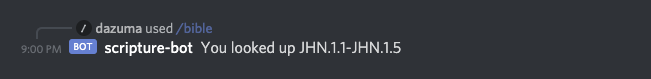

--runtime=ruby27 --allow-unauthenticatedAnd when we go back to our Discord channel and invoke the command… success!

Calling an external API

Now it’s time to implement the main functionality of our command, that of actually returning Scripture content from an API. We’ll access a suitable API, show how to manage its API key in production using Google Secret Manager, and write code to make API calls and include the results in the command response.

Signing up with API.Bible

API.Bible is a basic Scripture lookup API that boasts thousands of versions and translations, and allows reference lookup and keyword search. Their free tier includes access to public domain translations like the World English Bible, and a modest number of calls per day that will be more than adequate for my church’s needs.

I created an account and registered an application. The site says that new accounts are subject to approval, but mine went through almost immediately, perhaps because it was for non-commercial use by a small church. As part of my account, they gave me an API key. This is effectively a password to my account, and I need to handle it like any secret, keeping it out of code, source control, and any unencrypted communication.

The API website also includes “live” reference documentation that includes the ability to make API calls directly from the web page. From here, I identified the “passages” API call as the likely call I’ll need for my Discord command. The resource path for the request includes the ID of the Bible version, and the reference in a specific format. Then the response is a JSON payload including the passage content. Looks good, let’s experiment with it!
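The request path and the response envelope can be pinned down in isolation, without any network calls. The sketch below assumes the path layout and the “data” and “message” fields that the client later in this article relies on; the helper names passage_path and unwrap_response are made up for illustration, and the sample bodies are hypothetical and heavily trimmed.

```ruby
require "json"

# Build the "passages" resource path from a Bible ID and an API.Bible-style reference.
def passage_path(bible_id, reference)
  "/v1/bibles/#{bible_id}/passages/#{reference}"
end

# Unwrap a response the way this article's client does: return the "data" object
# on success, otherwise raise using the "message" field if one is present.
def unwrap_response(status, raw_body)
  body = JSON.parse(raw_body)
  raise(body["message"] || "Unknown error") unless status == 200 && body["data"]
  body["data"]
end

# Hypothetical, heavily trimmed response bodies, for illustration only.
ok_body = '{"data": {"id": "JHN.1.1", "content": "[1] In the beginning was the Word..."}}'
error_body = '{"message": "Passage not found"}'

puts passage_path("9879dbb7cfe39e4d-01", "JHN.1.1")
# prints /v1/bibles/9879dbb7cfe39e4d-01/passages/JHN.1.1
puts unwrap_response(200, ok_body)["content"]
begin
  unwrap_response(404, error_body)
rescue RuntimeError => e
  puts "error: #{e.message}"  # prints error: Passage not found
end
```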

Calling the API

As we did in part 2 with the Discord API, we’ll create a simple client class for the Bible API. We’ll start with a helper method that makes HTTP calls and handles results. This helper will also set the needed API key header. As we did with the Discord client, we’ll pass in the API key as a constructor argument.

```ruby
# bible_api.rb

require "faraday"
require "json"

class BibleApi
  def initialize(api_key:)
    @api_key = api_key
  end

  private

  def call_api(path, params: nil)
    faraday = Faraday.new(
      url: "https://api.scripture.api.bible",
      headers: {
        "api-key" => @api_key
      }
    )
    response = faraday.get(path) do |req|
      req.params = params if params
    end
    body = JSON.parse(response.body)
    unless response.status == 200 && body["data"]
      raise body["message"] || "Unknown error"
    end
    body["data"]
  end
end
```

Now we’ll implement a method for the “passages” call. It will take the reference as an argument. For simplicity, we’ll just hard-code the Bible ID for a reasonable translation, the World English Bible, Ecumenical version.

```ruby
# bible_api.rb
# ...

class BibleApi
  WEB_BIBLE_ID = "9879dbb7cfe39e4d-01"

  # ...

  # This is a public method, so define it above the `private` line.
  def lookup_passage(reference)
    params = {
      "content-type" => "text",
      "include-titles" => "false"
    }
    resource = "/v1/bibles/#{WEB_BIBLE_ID}/passages/#{reference}"
    response_object = call_api(resource, params: params)
    response_object["content"]
  end

  # ...
end
```

We can now test this by creating a simple command-line script using Toys. As before, we’ll pass the API key in on the command line, to keep it out of our code. We’ll also pass the Scripture reference on the command line.

```ruby
# .toys.rb

tool "lookup" do
  flag :api_key, "--api-key TOKEN"
  required_arg :reference

  def run
    require_relative "bible_api"
    client = BibleApi.new(api_key: api_key)
    result = client.lookup_passage(reference)
    puts result
  end
end

# ... and other scripts from before
```

Note that the Bible API uses a particular format for Scripture references. We need to use that format when we test our API client:

```shell
$ toys lookup --api-key=$MY_API_KEY JHN.1.1
[1] In the beginning was the Word, and the Word was with God, and the Word was God.
```

Calling the API from the webhook

Now that we have the Bible API working, we can write the rest of the code to integrate it into our webhook. We’ll construct a Bible API client in our Responder class, and rewrite our Responder#handle_command method to call it to get the passage content.

```ruby
# responder.rb
# ...
require_relative "bible_api"

class Responder
  # ...

  def initialize(api_key:)
    # Create a verification key (from part 1)
    public_key = DISCORD_PUBLIC_KEY
    public_key_binary = [public_key].pack("H*")
    @verification_key = Ed25519::VerifyKey.new(public_key_binary)

    # Create a Bible API client
    @bible_api = BibleApi.new(api_key: api_key)
  end

  # ...

  def handle_command(interaction)
    # Call the Bible API to get the content to return
    reference = reference_from_interaction(interaction)
    content = @bible_api.lookup_passage(reference)
    {
      type: 4,
      data: {
        content: "#{reference}\n#{content}"
      }
    }
  end

  # ...
end
```

Okay, we’re almost there. We have a working Bible API client, and our webhook command handler calls it to get content, and returns it to Discord for display. There’s just one problem. The Bible API client needs an API key. We’ve added an api_key argument to the Responder constructor so we can pass it down, but where does the Responder get the API key from? Remember from part 1 that a Responder is created when the function starts up:

```ruby
# app.rb

require "functions_framework"
require_relative "responder"

FunctionsFramework.on_startup do
  set_global(:responder, Responder.new(api_key: "???"))
end

FunctionsFramework.http "discord_webhook" do |request|
  global(:responder).respond(request)
end
```

Somehow we need to pass a secret into this app, and do so safely and securely. Let’s talk about how to do that.

Handling secrets

There are many ways to handle secrets without exposing them in our code. In the past, it has been common to set secrets in environment variables, or store them in special source files that we exclude from source control. These techniques work, but each has its flaws. Environment variables can be logged by accident or read by malicious code. Files can be accessed by anyone with access to the deployment image.

The technique we’ll implement here uses a local file for local testing, but uses a secret handling service, Google Cloud Secret Manager, for production. When we test locally, the secrets will be present in a local file that is excluded from source control, letting us test without having to make calls to a cloud service. When we deploy, however, we exclude this file, avoiding the security risk of having the file present in our deployment image. Instead, in production, we load the secret directly into memory from the Secret Manager service.

So the basic logic is: first check for a file, and if that’s not present, invoke Secret Manager. We’ll implement this logic in a new class, Secrets.
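Stripped of the Google Cloud specifics, that fallback fits in a few lines. This is an illustration rather than the Secrets class itself: the load_secrets helper is hypothetical, the remote fetch is passed in as a block so it can be stubbed out (in the real app, that role is played by the Secret Manager call), and it uses Psych.safe_load, which is enough for a flat map of strings.

```ruby
require "psych"
require "tmpdir"

# Prefer a local YAML file; only if it is absent, call the block to fetch the
# YAML text from a remote source (stubbed here; Secret Manager in the article).
def load_secrets(path)
  yaml = File.file?(path) ? File.read(path) : yield
  Psych.safe_load(yaml)
end

Dir.mktmpdir do |dir|
  path = File.join(dir, "secrets.yaml")

  # No file yet: falls back to the (stubbed) remote source.
  remote = load_secrets(path) { "bible_api_key: from-remote\n" }
  puts remote["bible_api_key"]  # prints from-remote

  # With a local file present, the block is never called.
  File.write(path, "bible_api_key: from-file\n")
  local = load_secrets(path) { raise "should not be called" }
  puts local["bible_api_key"]   # prints from-file
end
```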

First, we’ll add the client library for Secret Manager to our Gemfile:

```ruby
# Gemfile

source "https://rubygems.org"

gem "ed25519", "~> 1.2"
gem "faraday", "~> 1.4"
gem "functions_framework", "~> 0.9"
gem "google-cloud-secret_manager", "~> 1.1"
```

Don’t forget to run bundle install to ensure your Gemfile.lock gets updated.

Now let’s implement the Secrets class. In the code below, make sure you substitute the name of your Google Cloud project as the value of the PROJECT_ID constant. I’ve also chosen a name for the secret in the SECRET_NAME constant, but you can use a different name if you want.

```ruby
# secrets.rb

require "psych"
require "google/cloud/secret_manager"

class Secrets
  SECRETS_FILE = File.join(__dir__, "secrets.yaml")
  SECRET_NAME = "discord-bot-secrets"
  PROJECT_ID = "my-project-id"

  def initialize
    if File.file?(SECRETS_FILE)
      load_from_file
    else
      load_from_secret_manager
    end
  end

  attr_reader :bible_api_key

  def load_from_file
    secret_data = Psych.load_file(SECRETS_FILE)
    load_from_hash(secret_data)
  end

  def load_from_secret_manager
    secret_manager = Google::Cloud::SecretManager.secret_manager_service
    version_name = secret_manager.secret_version_path(
      project: PROJECT_ID, secret: SECRET_NAME, secret_version: "latest"
    )
    version_data = secret_manager.access_secret_version(name: version_name)
    secret_data = Psych.load(version_data.payload.data)
    load_from_hash(secret_data)
  end

  def load_from_hash(hash)
    @bible_api_key = hash["bible_api_key"]
  end
end
```

Now we can invoke this class from our function and use it to retrieve the API key:

```ruby
# app.rb

require "functions_framework"
require_relative "responder"
require_relative "secrets"

FunctionsFramework.on_startup do
  secrets = Secrets.new
  set_global(:responder, Responder.new(api_key: secrets.bible_api_key))
end

FunctionsFramework.http "discord_webhook" do |request|
  global(:responder).respond(request)
end
```

To get this to work for local testing, we just need to create the “secrets.yaml” file, substituting your actual API key:

```yaml
# secrets.yaml

bible_api_key: "mybibleapikey12345"
```

Make sure it stays out of source control. I use git, so I’ll add a .gitignore file:

```
# .gitignore

secrets.yaml
```

We also want to avoid deploying this file. By default, Cloud Functions honors .gitignore when deploying, so the file will be left out automatically. But if you don’t use git, or you have more complex requirements, you can configure which files are omitted from deployment by providing a .gcloudignore file.

In production, we want to read this data from Secret Manager, so we’ll need to upload it to Secret Manager first, using the Google Cloud command line:

```shell
$ gcloud secrets create discord-bot-secrets \
    --project=$MY_PROJECT --data-file=secrets.yaml
```

There’s one more thing we need to do. We’ve written code that loads the secrets from Secret Manager in production, but we need to grant that code access to Secret Manager. By default, an app running in Cloud Functions talks to Google Cloud APIs as a particular service account, $MY_PROJECT@appspot.gserviceaccount.com, so we need to grant that service account access to the secret:

```shell
$ gcloud secrets add-iam-policy-binding discord-bot-secrets \
    --project=$MY_PROJECT --role=roles/secretmanager.secretAccessor \
    --member=serviceAccount:$MY_PROJECT@appspot.gserviceaccount.com
```

For both of the above commands, make sure the project name and the secret name (discord-bot-secrets) match the corresponding names you used in the constants in the Secrets class.

Now we can redeploy our Function, and try it out.

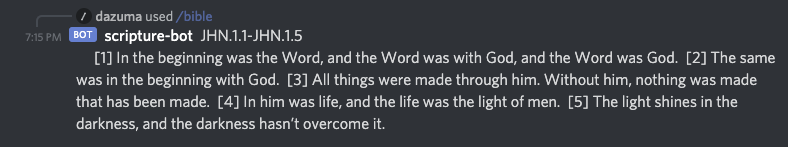

Woot!

Now what?

When I got to this point, things were mostly working, but I encountered a few issues.

The bot wasn’t very stable. Sometimes, the first time I tried running a command, I’d get a failure message, but then the next time it would work. Oddly, when I looked at the logs in Cloud Functions, everything seemed to be fine, even for the supposedly failed request.

The cause, it turns out, was that Discord waits a maximum of 3 seconds for a response; if it doesn’t receive one in time, it gives up and displays an error. This can be a challenge for a webhook that makes requests to external services, if those calls incur significant latency. It’s a particular issue for serverless hosting. An environment like Cloud Functions might shut down your app when it’s not in use, in order to conserve resources. Then, the next time it receives a request, it may take some time to boot back up. This is called a “cold start” in serverless lingo, and keeping it short can be a challenge for app developers.

In addition to calling the Bible API, our app calls Secret Manager during cold start, and sometimes the latency of that additional call is enough to push the first request after a cold start over the 3-second threshold.
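One way to confirm where the time goes is to measure each step and log it. Here is a minimal sketch; the timed helper, its 80% warning threshold, and the idea of wrapping steps with it are illustrative assumptions, not part of this article’s code. It uses Ruby’s monotonic clock so that wall-clock adjustments don’t skew the numbers, and writes to standard error with warn.

```ruby
# Discord's response window, per the discussion above.
DISCORD_DEADLINE_SECONDS = 3.0

# Run the block, log how long it took (warning when it used most of the
# deadline), and return both the block's result and the elapsed seconds.
def timed(label, warn_fraction: 0.8)
  start = Process.clock_gettime(Process::CLOCK_MONOTONIC)
  result = yield
  elapsed = Process.clock_gettime(Process::CLOCK_MONOTONIC) - start
  level = elapsed > DISCORD_DEADLINE_SECONDS * warn_fraction ? "WARNING" : "INFO"
  warn format("%s: %s took %.3fs", level, label, elapsed)
  [result, elapsed]
end

value, seconds = timed("sample work") { sleep 0.05; 42 }
```

Wrapping both the Secrets.new call in the startup block and the respond call in the HTTP handler with something like this would show in the function’s logs which step is eating into the budget.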

Additionally, the syntax that the Bible API requires for Scripture references wasn’t very friendly. Culturally, we’re used to a syntax like “John 1:1-5” rather than “JHN.1.1-JHN.1.5”. So I had to write a parser for “human” references, and convert them to the format needed by the Bible API.
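A parser for the common cases doesn’t need to be elaborate. The following is only a minimal illustration: it handles single verses and verse ranges within one chapter, and its book table is a small hypothetical subset, assumed to map onto the three-letter codes the API uses (such as JHN for John). A real version would need every book plus common abbreviations.

```ruby
# Hypothetical subset of book names mapped to the codes assumed to be used by
# API.Bible (e.g. "JHN"). Keys are lowercase with spaces removed.
BOOK_CODES = {
  "genesis" => "GEN",
  "psalms" => "PSA",
  "matthew" => "MAT",
  "john" => "JHN",
  "romans" => "ROM",
  "1john" => "1JN"
}.freeze

# Matches "Book C:V" or "Book C:V-W", where Book may start with a digit ("1 John").
REFERENCE_PATTERN = /\A\s*(\d?\s*[a-z]+)\s+(\d+):(\d+)(?:\s*-\s*(\d+))?\s*\z/i

# Convert a "human" reference like "John 1:1-5" into the API's "JHN.1.1-JHN.1.5".
# Returns nil if the reference isn't understood.
def to_api_reference(text)
  match = REFERENCE_PATTERN.match(text)
  return nil unless match
  code = BOOK_CODES[match[1].delete(" ").downcase]
  return nil unless code
  chapter, first_verse, last_verse = match[2], match[3], match[4]
  start = "#{code}.#{chapter}.#{first_verse}"
  last_verse ? "#{start}-#{code}.#{chapter}.#{last_verse}" : start
end

puts to_api_reference("John 1:1-5")  # prints JHN.1.1-JHN.1.5
puts to_api_reference("1 John 2:3")  # prints 1JN.2.3
```

Returning nil for anything unrecognized lets the command handler decide how to report a bad reference back to the user.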

Finally, displaying longer passages would also fail. There was no way, for example, to display the entire first chapter of John. Even though the Bible API would return the passage content, it turns out that Discord imposes a 2000-character limit on message length, and it refuses to post anything longer.
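Short of a real fix, one stopgap is to trim the text so it always fits, at the cost of cutting the passage short. A minimal sketch follows; the fit_message name and the choice to cut at the last whitespace and append “...” are illustrative, and it assumes Discord counts length in characters, as Ruby’s String#length does for UTF-8 strings.

```ruby
# Discord's maximum message length, per the discussion above.
DISCORD_MESSAGE_LIMIT = 2000

# Shorten text to fit in one Discord message, cutting at the last whitespace
# before the limit (when there is one) and appending an ellipsis.
def fit_message(text, limit: DISCORD_MESSAGE_LIMIT, ellipsis: "...")
  return text if text.length <= limit
  cut = text[0, limit - ellipsis.length]
  last_space = cut.rindex(/\s/)
  cut = cut[0, last_space] if last_space && last_space > 0
  cut.rstrip + ellipsis
end

puts fit_message("word " * 1000).length  # prints 1997
```

In handle_command, this could wrap the content string before it goes into the response hash.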

So I had a working command, but I wasn’t really satisfied with the results. Parsing reference syntax is just programming, and I’ll leave improving it as an exercise for the reader. But the latency and the longer passages were a real usability hindrance. The good news is, there are solutions, and we’ll explore them in part 4.

Notes

I work at Google in my day job, so all code in this article is:

Copyright 2021 Google LLC

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.